Earlier this week, I was tuning my PC’s RAM settings and entered “unstable territory”, which caused cold reboots immediately after login, and again during boot. After dialing back to the previously stable configuration, I figured I’d be good to go. But no: my Windows install was now stuck in an infinite “Startup Repair” boot loop.

Here is the list of steps I went through, in case any of them are helpful to you. I restarted after each of the attempted fixes below:

- Attempted fix 1: Use the go-to “Startup Repair” option. This told me it couldn’t fix the problem on my PC. I also attempted this from a freshly created Windows 10 installation USB stick, to no avail.

- Attempted fix 2: Command prompt, “chkdsk c:” – this found no problems with the disk. Also ran “chkdsk c: /offlinescanandfix” with the same result (although much faster).

- Attempted fix 3: “sfc /scannow /OFFBOOTDIR=c:\ /OFFWINDIR=c:\Windows” – no errors found. (It initially failed to run because it could not start a service. After repeated attempts, including manually starting the service, which also failed, I had to exit the command prompt, open it again, and re-issue the command before it ran successfully.)

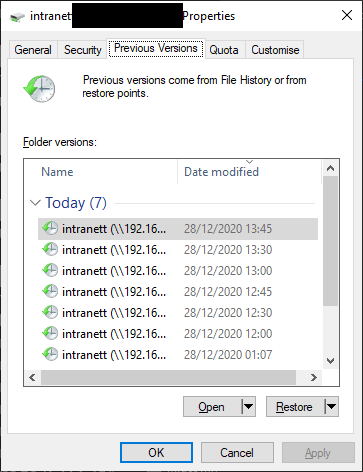

- Attempted fix 4: System Restore. This failed spectacularly as it didn’t know where the Windows install was, and recommended I reboot and try again. I did, to no avail. I then ran it manually in Command Prompt (“rstrui.exe /OFFLINE:C:\Windows” – according to this thread), which did let me select restore points. However, it failed to restore a desktop.ini file, and therefore aborted the whole restore procedure. Go figure.

- Attempted fix 5: Correcting the boot loader with the “BootRec” utility.

> c:

> cd c:\Windows\System32

> BootRec.exe /FixMbr

(OK)

> BootRec.exe /FixBoot

(OK)

> BootRec.exe /RebuildBCD

(Failed to add file which already exists)

- Attempted fix 6: Correcting the boot loader by killing it with fire:

> c:

> cd c:\Windows\System32

> Diskpart

(Now entered the Diskpart utility)

>> List disk

(Only one disk)

>> Sel disk 0

>> List vol

(Three volumes. 0 was c:, 1 was recovery partition, 2 was UEFI FAT32 partition)

>> Sel vol 2

>> assign letter=V:

>> Exit

(Back in normal shell)

> V:

> format v: /FS:FAT32

(Opted yes to the prompt which warned me this was the end of the world)

> c:

> cd c:\Windows\System32

> bcdboot C:\windows /s V: /f UEFI

(Opted yes to the prompt which warned me this was the end of the world again – sorry folks, it’s my fault)

> exit

And now, back at the welcome screen, which previously only listed “Advanced Options” and “Shutdown PC”, there was an option for continuing to boot my PC. It successfully booted, and I now wonder: why didn’t the automated attempts at fixing the boot process detect that the EFI partition was broken?

I guess Windows breaks easily, is why.
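
For future me (and anyone else in the same hole), here is fix 6 condensed into a small Python sketch. It only builds the command text and runs nothing. The function names `diskpart_script` and `repair_commands` are made up for this post, and the defaults (disk 0, volume 2, letter V, Windows in C:\Windows) are simply what my machine showed in the listing above, so check `List vol` on yours before trusting any of them.

```python
def _check_letter(letter: str) -> str:
    """Validate a drive letter and return it upper-cased (hypothetical helper)."""
    if len(letter) != 1 or not (letter.isascii() and letter.isalpha()):
        raise ValueError("letter must be a single drive letter, e.g. 'V'")
    return letter.upper()


def diskpart_script(disk: int = 0, volume: int = 2, letter: str = "V") -> str:
    """Build the diskpart commands that give the EFI volume a drive letter.

    Defaults are assumptions taken from my own machine, not universal values.
    """
    if disk < 0 or volume < 0:
        raise ValueError("disk and volume numbers must be non-negative")
    letter = _check_letter(letter)
    lines = [
        f"select disk {disk}",
        f"select volume {volume}",
        f"assign letter={letter}",
        "exit",
    ]
    return "\n".join(lines) + "\n"


def repair_commands(letter: str = "V", windows_dir: str = r"C:\Windows") -> list:
    """Return the two shell commands that wipe and rebuild the EFI partition."""
    letter = _check_letter(letter)
    return [
        # Destructive: erases everything on the EFI partition.
        f"format {letter}: /FS:FAT32",
        # Copies fresh boot files from the Windows install to the EFI partition.
        f"bcdboot {windows_dir} /s {letter}: /f UEFI",
    ]


if __name__ == "__main__":
    print(diskpart_script(), end="")
    for command in repair_commands():
        print(command)
```

The first part of the output can be saved to a text file and passed to `diskpart /s`, and the last two lines are typed at the normal prompt. The format step really does erase the EFI partition, so double-check the letter points at the right volume first.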